The Four Eras of Art:

A Brief History of Art Throughout the Ages

After learning there would be two projects for the semester, I knew I wanted them to tie in together. Whether through a theme or a technique, I wanted the two projects to form a cohesive body of work. In the end, I wanted each project to be a true companion piece to the other.

The first project dealt with the many museums around the world (based on size). For the second project, which included an interactive element, I considered exploring either different artists around the world or art history in general. I eventually chose the art history route. This report documents the development and processes used to create this interactive tool, which is meant to help users understand the great artistic achievements humans have made throughout history.

Defining the Project

Doing an interactive globe of the various museums around the world for the first project was not only challenging but super rewarding. For the second project, I wanted to explore various artists around the world through a physical globe, but it seemed too similar to the previous project. So, I decided to focus on art history since it tied more into the museum theme.

While researching online, I came across an old blog post by Alex Lubbock. In it, Lubbock described a button-driven museum display using a Raspberry Pi, which fit my concept well. I looked at the Python code, the video player he used, and the 3D files used to create the four-button box. As I was examining the 3D file, I thought it would be great to turn those buttons into triggers for a page turn, as if one were turning the pages of a history book! At that instant I knew what the interactive aspect of my project would be: a book whose pages electronically teach the different periods of art.

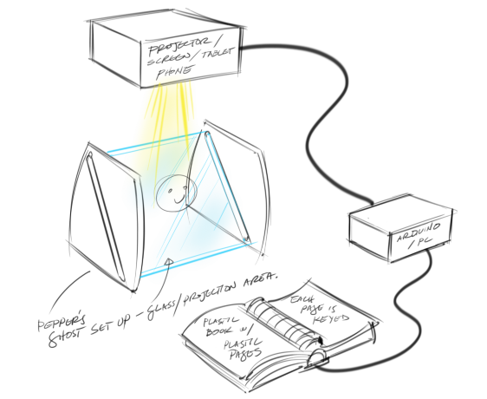

During my exploration, I came across different ways of displaying a video, including a contraption called a Pepper’s ghost. An image is reflected off a sheet of clear glass or acetate so that it appears holographic. I thought this would be a great way to present great pieces of art. I also thought that revolving the artworks in 3D would heighten the visual experience, and I hadn’t seen anything like this done before.

Knowing that this was an interactive component, I had to create some kind of video to go with the different aspects of art history. That’s when I stumbled a bit. I realized there were so many periods in art. How could I narrow them down to a limited number of sections, specifically four, and still represent all of art history? I went back online to look for a solution and came across an article stating that there are four main eras in the history of art: the Artisan Era (prehistoric to 17th-century art), the Romantic Era (18th- to 20th-century art), the Modern Era (19th- to mid-20th-century art), and the Contemporary Era (mid-20th-century to present-day art).

After establishing the four art eras, I had to find art pieces that represented each one. I decided ten pieces per era would suffice: not too many and not too few. Plus, I didn’t want the videos to be long and taxing; they should be informative and straight to the point. So I collected not only images of these great masterpieces but also 3D files for frames that roughly represented how the art is currently framed and displayed in museums. After finding resources for some frames and building others myself, I was set to start making the videos.

Figures: Completed first project for Emerging Media; inspiration from Lubbock’s button-driven Raspberry Pi; 3D frame for Blue Boy in Rhino 3D; using ChatGPT to help write scripts for the videos; Pepper’s Ghost reference; preliminary sketch for the second project proposal for Emerging Media; Balloon Dog being modified in Rhino 3D; animating the Mona Lisa painting and frame in KeyShot; using Voicemaker to add a narrator to the videos; using Adobe Premiere Pro to put all the animations together in four separate videos.

Developing the Videos

Using Rhinoceros 3D, I created CAD files of each of the pieces along with their frames. Because most of the pieces were painted on canvas, the 3D aspect of the canvases themselves wasn’t very interesting. The frames gave the images a greater sense of dimension. I also looked on thingiverse.com for free existing frames and sculptures. In some cases, I had to purchase the 3D files, like the one based on Jeff Koons’s Balloon Dog.

After creating the 3D files, I used another program called KeyShot, a rendering program that allows me to add color, texture, and animation to a 3D file. Once I had recreated a piece in KeyShot, I used its animation tool to spin the art object 360 degrees, as if it were on a turntable, which gives it dynamic movement. After experimenting a few times, I realized that seeing the back of a painting wasn’t very interesting. So I took artistic liberties and made all the pieces (except the sculptures) double-facing. To my surprise, it worked out well.

All forty pieces went through the turntable animation sequence. Rendering just 10 seconds of animation took about half an hour or more, depending on how intricate the frame was. The Mona Lisa, because of the intricacy of its frame, took about 4 hours for 10 seconds of animation. Regardless, everything looked great, and all I needed now was to put everything together.

Because I consider this an educational tool, I wanted a narrator to go through the different aspects of each period. With the help of ChatGPT, I created a script for each of the art eras. I also used an online AI voice service called Voicemaker, where I was able to find an AI voice that went well with the videos, with a very PBS-documentary sound.

I took all forty short animated clips into Adobe Premiere Pro, a video editing program, and began to make the four videos based on the different eras. I added title pages and citations for each of the pieces. Another task was flipping the videos horizontally so they would reflect correctly in the Pepper’s Ghost. Once I had strung everything together, I added the narrated audio.
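As a toy illustration of why the clips are pre-flipped: a mirror-like reflection reverses the image left to right, so a frame that has already been reversed comes out the right way round. The sketch below models a frame as rows of characters. The names `flip_horizontal` and `reflect` are hypothetical, and treating the reflection as a pure left-right reversal is a simplifying assumption rather than a model of the actual optics or of what Premiere Pro does internally.

```python
def flip_horizontal(frame):
    """Return a copy of the frame with every row reversed left to right."""
    return [row[::-1] for row in frame]


def reflect(frame):
    """Assumed model of the reflection: a simple left-right mirror."""
    return flip_horizontal(frame)


# A tiny stand-in for a title card, one string per row of "pixels".
title_frame = ["ART ", "ERAS"]

# Played as-is, the viewer would see mirrored text.
seen_without_preflip = reflect(title_frame)

# Flipped in the editor first, the reflection restores the original.
seen_with_preflip = reflect(flip_horizontal(title_frame))
```

Applying the same reversal twice returns the original frame, which is why flipping once in the editor cancels out the flip introduced by the reflection.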

Designing of the Book

Before I could really start working on the book, I needed to acquire the materials that would let it act as a controller. I purchased an arcade button set (of which I needed four buttons) and a Raspberry Pi. From my research, I knew I just needed a Raspberry Pi 3, which is enough to play my videos; I didn’t need one of the latest models. From there, I measured the components and was ready to start designing the book.

A quick note on the choice of computer: even though the Lubbock example used a Raspberry Pi, I wasn’t sure that was the direction I wanted to go. After a bit more research comparing the Raspberry Pi with an Arduino, I went with the Raspberry Pi. From what I read, the Arduino can’t handle higher-resolution video. Videos are one of the major components of this project, so the Raspberry Pi won out.

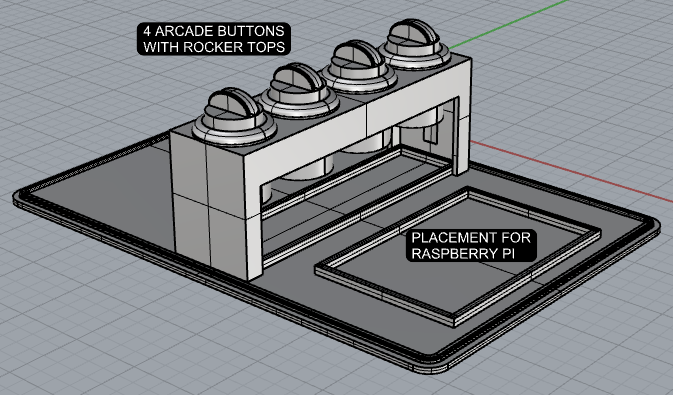

Using Rhinoceros 3D once again, I designed the interactive book. The challenge for this part of the project was figuring out how the pages would interact with the buttons. I looked at a couple of different methods for triggering the buttons, including internal gears and cams. I eventually landed on an internal cam plus a rocker cap for the existing button. So I modeled that in 3D along with the pages and the housing. I also took into consideration the size of the Raspberry Pi and the four arcade buttons so that everything fit nicely in the book unit.

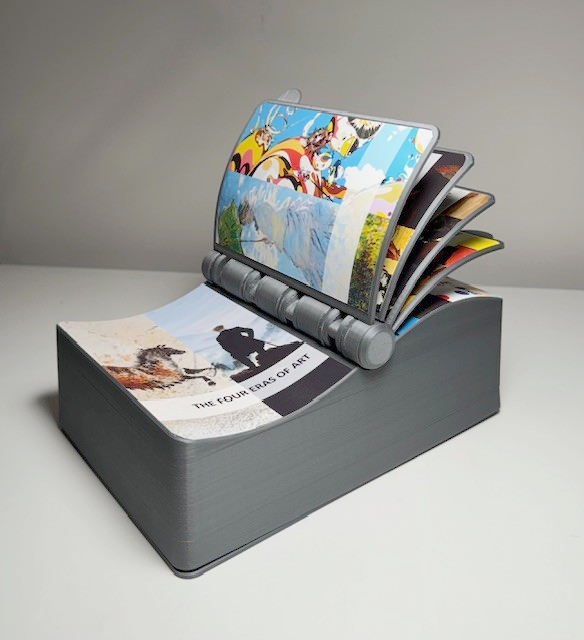

Next I designed the pages for each of the eras. I decided to take sections of some of the major works I had chosen and put them together as a simple collage. Overall it’s simple and gets the point across.

Figures: Raspberry Pi 3 from Amazon.com; book control unit; book bottom plate showing placements for arcade buttons, rocker caps, and Raspberry Pi.

Printing of the Book

After completing the CAD of the book controller, it was time to start printing it. With all 3D files, it’s good practice to separate the pieces into separate files. That way one can better organize the pieces in the slicer to maximize the printing area. The slicer is the program that takes your 3D files and converts them into G-code so they can be 3D printed. The program I use for this is QIDISlicer, the slicer that came with my 3D printer, a QIDI X-Plus 3.

To help expedite the printing of the book controller, I also used an additional printer, a QIDI S i-mate, which is my backup printer.

Figures: Arcade button kit from Amazon.com; book control unit, exploded view; how turning the first page makes its internal cam hit the rocker cap and trigger the arcade button; preparing 3D files to be printed in the slicer; prototype of the Pepper’s Ghost; 16” Cintiq monitor; Pepper’s Ghost build in Rhino 3D; my main 3D printer, a QIDI X-Plus 3; bottom plate with components attached (Raspberry Pi and four arcade buttons with rocker caps); artwork for the pages in the book; Pepper’s Ghost proof-of-concept test; final Pepper’s Ghost build size vs. prototype; CanaKit GPIO reference card; wire setup from the arcade buttons to the Raspberry Pi; my backup 3D printer, a QIDI S i-mate; pages being printed on the backup 3D printer; book controller with artwork.

Artwork for the Book

Coming up with artwork for the book controller was a bit of a challenge at first. I wasn’t sure what to use to convey the idea of passing through the ages. Plus, doing that treatment not just for the front cover but for all four eras as well felt daunting. I pondered this for quite some time. Then I came up with the idea of choosing a few of the pieces I had for each era to represent that era on its page. I decided to crop snapshot strips from a few of the pieces and put them together, giving a quick overview of the feel of that era’s art. Surprisingly, it seemed to work okay.

Making the Pepper’s Ghost

I really liked the idea of using a Pepper’s Ghost. It’s a unique way of presenting this concept. I have always enjoyed the holographic appearance this illusion provides, and I also like that it’s a technique from the 1800s. Plus, I think combining old tech with new tech is so cool!

Before I started experimenting with the Pepper’s Ghost, I watched a lot of videos online to see which setup would be best for what I was trying to achieve. In the end, there were two choices: having the video source on the top or on the bottom. Both versions looked good, but then I started to think about the original intention of the Pepper’s Ghost, which is an illusion. That means you don’t want to see where the source image is coming from. So I decided to go with the video source on top, because I didn’t want to ruin the illusion. It is magic after all.

To make things easier on myself, I decided to build the Pepper’s Ghost out of foamcore (foam board). It’s a lightweight material that can easily be cut, and I could just use a hot glue gun to assemble it. Since the prototype used an iPhone as its source, I wanted a larger screen to work with. Luckily I have a 16” Cintiq monitor that is lightweight, portable, and easy to set up. To build the Pepper’s Ghost to the size of the Cintiq, I turned again to my trusty Rhino 3D program. I built it in 3D to compensate for the thickness of the foamcore. When I was making the prototype, I had to make a few tweaks because I hadn’t taken the thickness of the board into account. I didn’t want to deal with those issues in the final Pepper’s Ghost; I wanted a clean build.

Note: When I filmed the video for the final, I had two types of reflective material: an acrylic sheet and a glass sheet. Both worked fine, but I started to see an issue as I was filming. Although both sheets were fairly thin (roughly 1.80 mm), they were thick enough to create a double reflection, because the image reflects off both the front and back surfaces of the sheet. This bothered me even after the final filming. So I did a bit more research and found an acetate sheet that was 0.07 mm thick. When the acetate sheet arrived and I tried it, the double reflection was gone! If there is still a double reflection, the sheet is so thin that it’s too difficult to distinguish. I am planning to film one more time using the acetate sheet.
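To get a rough feel for why the thinner sheet helps, the gap between the front-surface and back-surface reflections of a flat sheet can be estimated from its thickness. The sketch below is only an approximation under stated assumptions: a 45-degree viewing angle and a refractive index of 1.5 for all three materials, neither of which was measured for this build. The function name `ghost_offset_mm` is hypothetical.

```python
import math


def ghost_offset_mm(thickness_mm, incidence_deg=45.0, refractive_index=1.5):
    """Estimate the gap between front- and back-surface reflections of a flat sheet."""
    theta_i = math.radians(incidence_deg)
    # Snell's law gives the angle of the ray once it is inside the sheet.
    theta_r = math.asin(math.sin(theta_i) / refractive_index)
    # The ray crosses the sheet twice, shifting 2*t*tan(theta_r) along the surface.
    # Multiplying by cos(theta_i) turns that shift into the gap between the
    # two parallel reflected rays as seen by the viewer.
    return 2.0 * thickness_mm * math.tan(theta_r) * math.cos(theta_i)


thick_sheet = ghost_offset_mm(1.80)  # glass or acrylic thickness from the note above
thin_sheet = ghost_offset_mm(0.07)   # acetate thickness from the note above

print(f"1.80 mm sheet: reflections about {thick_sheet:.2f} mm apart")
print(f"0.07 mm sheet: reflections about {thin_sheet:.3f} mm apart")
```

Under these assumptions the two reflections sit a little over a millimetre apart for the 1.80 mm sheets and only a few hundredths of a millimetre apart for the acetate. The gap scales directly with thickness, which is consistent with the double image becoming too small to notice.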

Setting up the Raspberry Pi

Before I could start programming, I needed to set up the Raspberry Pi with the arcade buttons so I could test the code. Using the GPIO reference card that came with the Pi, I was able to wire up the buttons. For an arcade button to work, it needed to connect to one GPIO pin, one ground pin, and one voltage pin. Setting up the first three buttons wasn’t an issue, but the fourth button gave me some trouble. Even though I chose a free GPIO, ground, and voltage pin, I somehow kept short-circuiting the Raspberry Pi. I don’t know how many combinations of pins I tried, but after a while I found a combination that didn’t short the computer.
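One thing that makes this kind of wiring easy to get wrong is that the Pi has two numbering schemes: the BCM numbers used in code and the physical positions on the 40-pin header. The sketch below is a small bookkeeping aid, not a diagnosis of the short described above, since the report does not record which physical pins were tried. The table covers only the four BCM pins used in this report’s script, and the helper names `role_of_physical_pin` and `check_button_pins` are hypothetical.

```python
# Physical header positions for the BCM GPIO numbers used in this report's script.
BCM_TO_PHYSICAL = {4: 7, 10: 19, 16: 36, 26: 37}

# Power and ground positions on the 40-pin header (physical numbering).
POWER_3V3 = {1, 17}
POWER_5V = {2, 4}
GROUND = {6, 9, 14, 20, 25, 30, 34, 39}


def role_of_physical_pin(physical_pin):
    """Say whether a physical header position is power, ground, or something else."""
    if physical_pin in POWER_3V3:
        return "3.3V power"
    if physical_pin in POWER_5V:
        return "5V power"
    if physical_pin in GROUND:
        return "ground"
    return "other"


def check_button_pins(bcm_pins):
    """Return a list of bookkeeping problems in a proposed set of BCM button pins."""
    problems = []
    if len(set(bcm_pins)) != len(bcm_pins):
        problems.append("a GPIO is assigned to more than one button")
    for bcm in bcm_pins:
        if bcm not in BCM_TO_PHYSICAL:
            problems.append(f"BCM {bcm} is not in the lookup table")
    return problems


for bcm in (4, 10, 16, 26):
    print(f"BCM {bcm} -> physical pin {BCM_TO_PHYSICAL[bcm]}")
```

For example, BCM 4 sits at physical position 7, while physical position 4 is a 5V power pin, so reading a number off the wrong scheme can put a wire on a power pin by accident.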

Programming with Python

I could not have done this project without the help of ChatGPT. It was integral to writing the Python code, for which I’m very grateful.

Before I get into Python, I want to refer back to one of the inspirations for this project, Alex Lubbock’s “Button Driven Raspberry Pi” blog. In it he used a video player called OMXPlayer. That program is officially defunct, but I still wanted to use it because Lubbock had Python code for it. I managed to get OMXPlayer through GitHub and tried to run it, but couldn’t. Eventually I gave up, and all signs pointed to VLC. I was hesitant to go with VLC because I didn’t have code for it. Thankfully, my partner reminded me that I have ChatGPT, and that was the start of a bright, blossoming relationship!

After working through the code with ChatGPT, and with input from Professor Miller, I eventually got the behavior I wanted: with the press of a button, a video plays through VLC in fullscreen. Mid-play, it can be interrupted by another button press to start a new video. Below is the full Python script. One of the lines of the script is long, but if you copy and paste it, the full code will be there.

import RPi.GPIO as GPIO
import subprocess
import time

# Set GPIO mode
GPIO.setmode(GPIO.BCM)

# Define GPIO pins for the arcade buttons
button_pins = [4, 10, 16, 26]

# Define video paths
video_paths = {
    4: "/home/machinerobo/Desktop/contemporary_era_update.mp4",
    10: "/home/machinerobo/Desktop/romantic_era_update.mp4",
    16: "/home/machinerobo/Desktop/modern_era_update.mp4",
    26: "/home/machinerobo/Desktop/artisan_era_update.mp4"
}

# Define analog audio device
analog_audio_device = "hw:0,0"

# Setup button pins as input with pull-down resistor
for pin in button_pins:
    GPIO.setup(pin, GPIO.IN, pull_up_down=GPIO.PUD_DOWN)

# Variable to store the VLC process
vlc_process = None

try:
    while True:
        for pin in button_pins:
            # Check button state
            if GPIO.input(pin) == GPIO.HIGH:
                print(f"button {pin} pressed")
                # Get video path for the pressed button
                video_path = video_paths.get(pin)
                if video_path:
                    # Kill the previous VLC process if it exists
                    if vlc_process:
                        vlc_process.terminate()  # Terminate the VLC process
                    # Launch VLC to play video with analog audio output and full screen
                    vlc_process = subprocess.Popen(["vlc", video_path, "--aout=alsa", "--alsa-audio-device=" + analog_audio_device, "--fullscreen"])
                # Wait for button release
                while GPIO.input(pin) == GPIO.HIGH:
                    time.sleep(0.1)
                # Delay to avoid multiple button presses
                time.sleep(0.5)
except KeyboardInterrupt:
    # Cleanup GPIO
    GPIO.cleanup()
    # Terminate the VLC process if it exists
    if vlc_process:
        vlc_process.terminate()
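The switching behavior in the script (stop whatever is playing, then launch the new video) can also be sketched on its own, without any GPIO hardware attached. This is an illustrative refactoring under assumptions, not the code that ran in the final build. `build_vlc_command` and `VideoSwitcher` are hypothetical names, the VLC flags are copied from the script above, and the `launch` parameter exists only so the logic can be tried without VLC installed.

```python
import subprocess


def build_vlc_command(video_path, audio_device="hw:0,0"):
    """Build the VLC argument list, using the same flags as the script above."""
    return [
        "vlc", video_path,
        "--aout=alsa",
        "--alsa-audio-device=" + audio_device,
        "--fullscreen",
    ]


class VideoSwitcher:
    """Keeps at most one player process alive at a time."""

    def __init__(self, launch=subprocess.Popen):
        self._launch = launch   # injectable so the logic can be tried without VLC
        self._process = None

    def stop(self):
        """Stop the current video, if any, and wait for the player to exit."""
        if self._process is None:
            return
        self._process.terminate()
        try:
            self._process.wait(timeout=5)
        except subprocess.TimeoutExpired:
            self._process.kill()  # last resort if the player ignores terminate()
        self._process = None

    def play(self, video_path):
        """Interrupt whatever is playing and start the requested video."""
        self.stop()
        self._process = self._launch(build_vlc_command(video_path))
        return self._process
```

In the button loop, a call such as `switcher.play(video_paths[pin])` would stand in for the terminate-and-Popen lines. Calling `switcher.stop()` and `GPIO.cleanup()` from a `finally:` block, rather than only on `KeyboardInterrupt`, would also tidy up if the script exits for some other reason.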

Video: The Four Eras of Art

The following is the new and improved video.